Konvu has been named a Supply Chain Innovator in Latio's 2026 Application Security Market Report. Latio has quickly become one of the most trusted independent voices in application security, and its recognition validates what we've been building: an exploitability engine that goes beyond reachability analysis, powered by a sophisticated agentic system that actually reasons about your environment.

"Konvu stands out by combining all aspects of reachability with AI-based prioritization, resulting in some of the most robust false-positive reduction on the market."

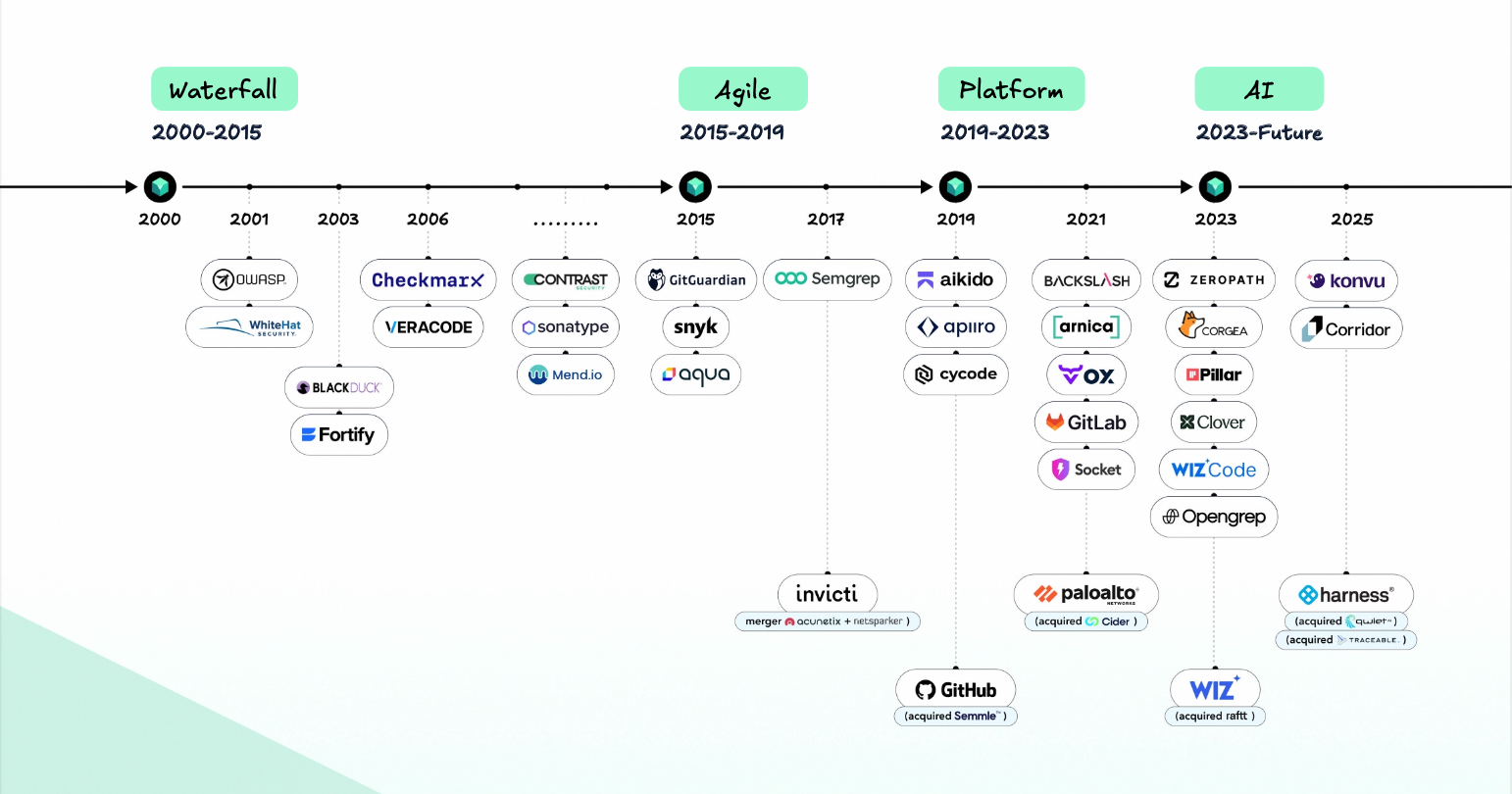

Four Eras of Application Security

Latio's report divides application security into four distinct eras, each responding to how software was built and deployed:

Waterfall (2000-2015). Early application security served enterprises building large, compiled software on annual release cycles. Multi-hour scans ran against C and Java codebases. OWASP established best practices. Vendors like Fortify, Checkmarx, and Veracode built the foundational scanning engines still used for legacy systems today.

Agile (2015-2019). The shift to DevOps, cloud Git, and CI/CD changed everything. Dynamic languages exploded in adoption. Open source dependencies became central to how software was built. New players emerged to address these new workflows, bringing SCA and secrets scanning directly into developer pipelines. The "shift left" movement promised security at the speed of development.

Platform (2019-2023). As tools multiplied, consolidation became essential. Snyk expanded from SCA into containers, IaC, and SAST. New products emerged to orchestrate scanners and provide visibility across sprawling development environments. Code-to-cloud correlation became the defining feature.

AI (2023-Future). Vulnerability volume is soaring and time-to-exploit is shrinking. The platform play, consolidating scanners and correlating findings, was built for a different pace. Scaling AppSec to this era requires a different approach: one that helps teams triage at the speed of the threat and focus on what's actually exploitable. Konvu is listed in this era alongside other great companies building for that shift.

The report makes clear: we're in a period of transition. Traditional scanning still has a place, but AI is fundamentally changing both how vulnerabilities are created and how they're found.

Four Trends from the Report That Match What We're Building

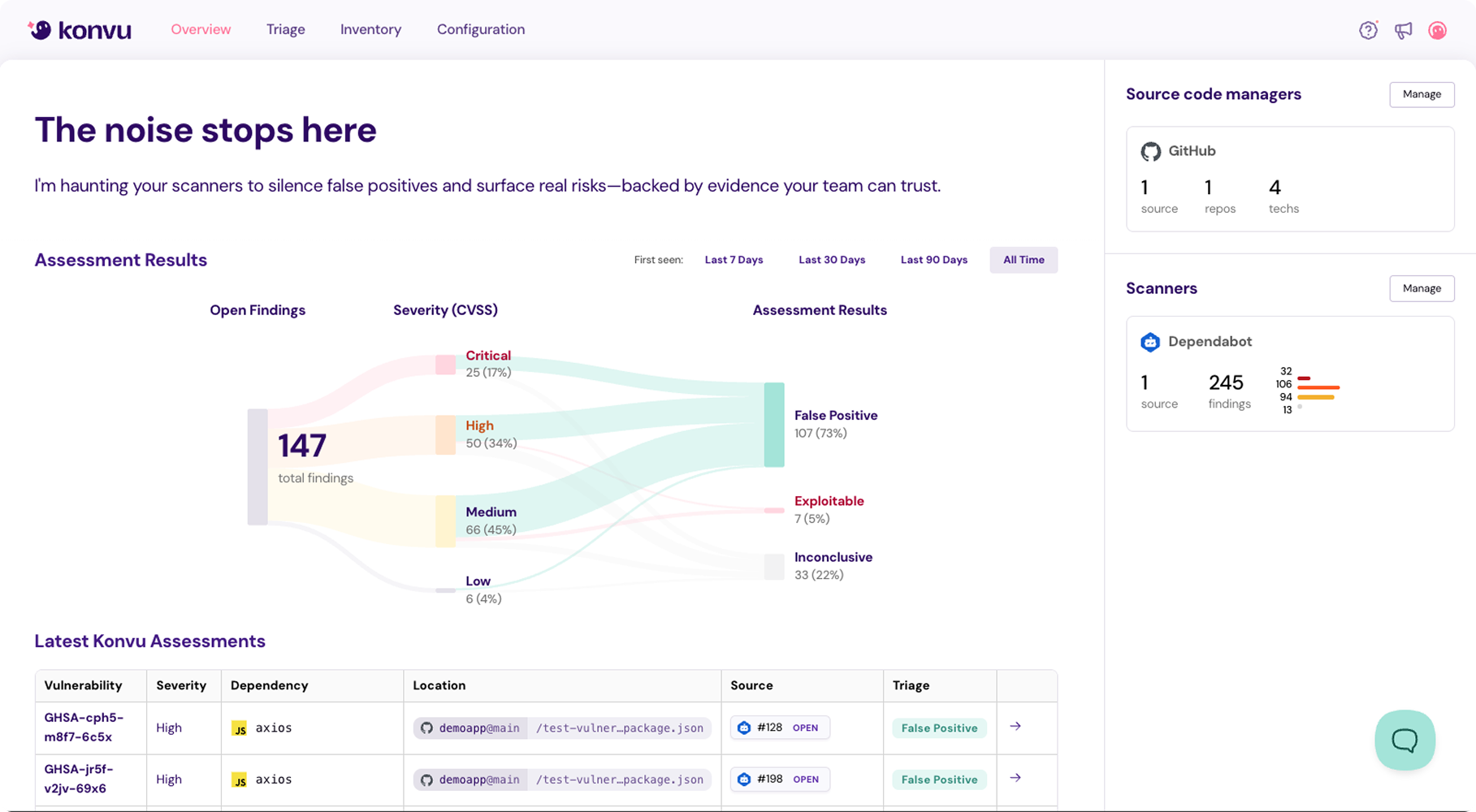

1. Noise is the #1 problem. The report frames application security teams as drowning in findings. Organizations need tools that determine which vulnerabilities are actually exploitable and which are only theoretically present.

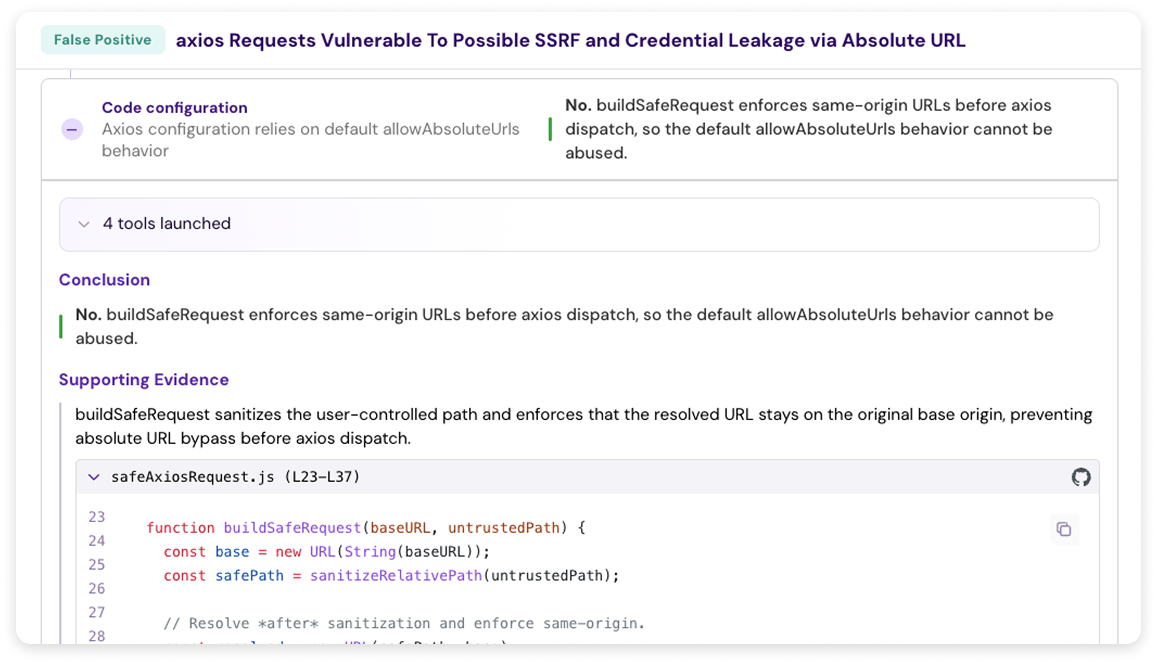

2. Reachability is table stakes; exploitability is the differentiator. Multiple vendors now offer reachability analysis. The report distinguishes between "is the code path callable?" and "can an attacker reach it in context?" The latter is where real risk reduction happens.

3. AI implementations are mostly shallow. Latio dedicates a section to AI and is honest: most implementations are autocomplete-level suggestions or basic summarization. The "sprinkle AI on it" approach isn't moving the needle.

4. Cross-scanner augmentation is validated. Enterprises don't want to rip and replace their existing scanners. They want an intelligence layer on top that enriches findings and orchestrates remediation across tools.

What Exploitability Analysis Actually Looks Like

Exploitability over reachability

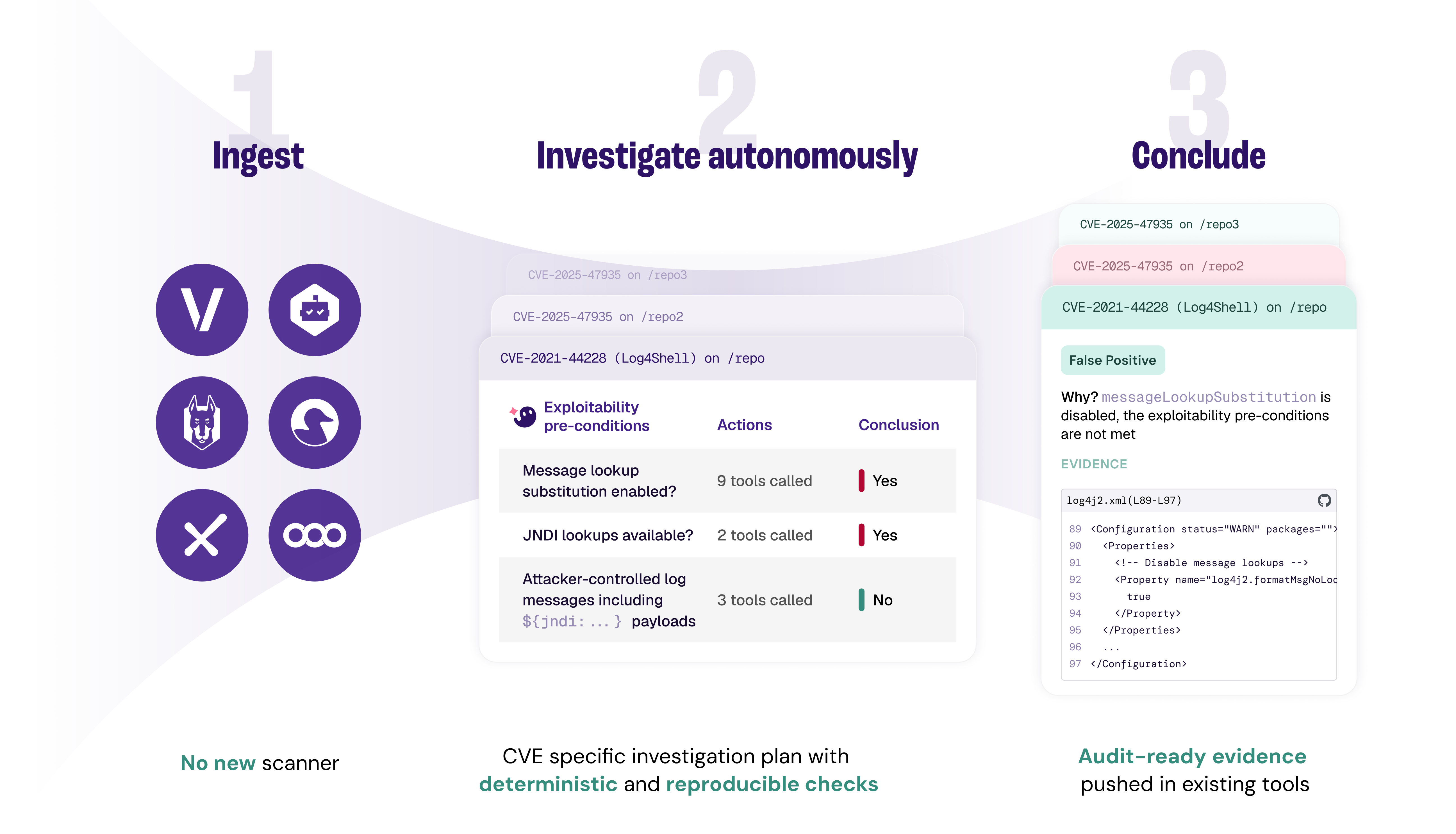

We model each CVE as a structured set of conditions, not a severity score. Our engine verifies each condition against your actual environment: code, configurations, and controls.

For example, with Log4Shell (CVE-2021-44228):

- Reachability says: "HTTP handler calls `logger.info()` → vulnerable"
- Exploitability checks: Is `formatMsgNoLookups=true`? Is the `JndiLookup` class present? Do attacker-controlled log sources exist?

Many services marked "reachable" become non-exploitable when you ask the right questions.
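To make the distinction concrete, here is a minimal Python sketch, assuming a hypothetical `env` dictionary of facts already extracted from a service's code and configuration. The key names and the three conditions are illustrative, mirroring the Log4Shell questions above; this is not Konvu's engine or data model.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical facts about one service, gathered from code and configuration.
Environment = dict[str, object]


@dataclass(frozen=True)
class Condition:
    description: str
    holds: Callable[[Environment], bool]


# Illustrative preconditions for CVE-2021-44228 (Log4Shell); all key names are assumptions.
LOG4SHELL_CONDITIONS = [
    Condition(
        "message lookups are not disabled (formatMsgNoLookups is not true)",
        lambda env: str(env.get("log4j2.formatMsgNoLookups", "false")).lower() != "true",
    ),
    Condition(
        "JndiLookup class is present on the classpath",
        lambda env: bool(env.get("jndi_lookup_class_present")),
    ),
    Condition(
        "attacker-controlled data reaches a log call",
        lambda env: bool(env.get("attacker_controlled_log_sources")),
    ),
]


def is_reachable(env: Environment) -> bool:
    # Reachability only asks whether the vulnerable call sits on a code path.
    return bool(env.get("http_handler_calls_logger"))


def is_exploitable(env: Environment) -> bool:
    # Exploitability also requires every precondition to hold in this environment.
    return is_reachable(env) and all(c.holds(env) for c in LOG4SHELL_CONDITIONS)


service = {
    "http_handler_calls_logger": True,
    "log4j2.formatMsgNoLookups": "true",  # mitigation switched on
    "jndi_lookup_class_present": True,
    "attacker_controlled_log_sources": True,
}
print(is_reachable(service), is_exploitable(service))  # True False
```

The same service is "reachable" but not exploitable: flipping the hypothetical `log4j2.formatMsgNoLookups` fact to `"false"` is what would make `is_exploitable` return `True`.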

Agentic investigation, deterministic checks

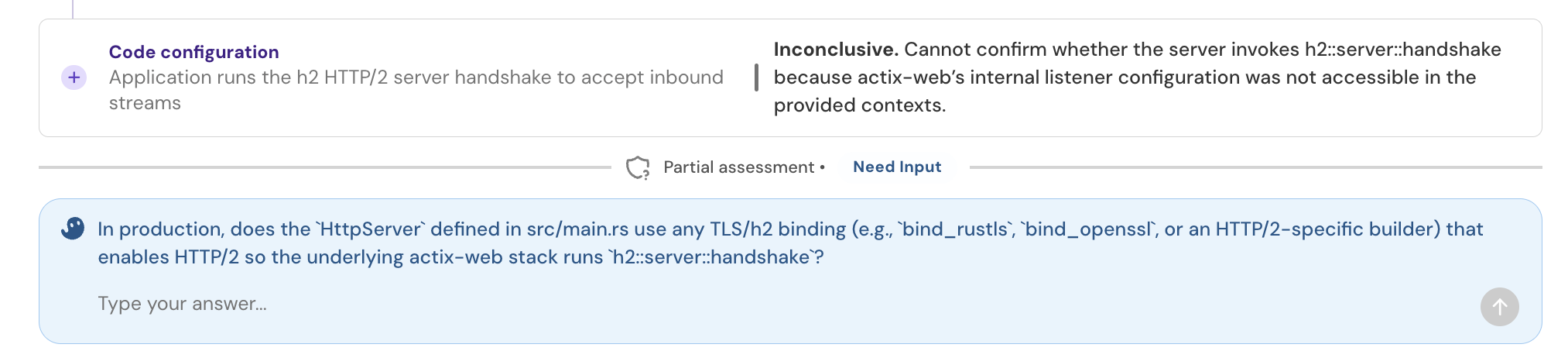

Our engine combines structured vulnerability intelligence (conditions extracted from advisories, exploit code, and security research) with AI agents that verify each condition against your codebase. It's not an LLM summarizing a CVE. It's deterministic checks executed by agents that can read code and parse configurations.

When we have full context, the verdict is automatic. When we don't, we surface exactly what's missing so your team can make the call in minutes instead of hours.
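One way to picture "automatic verdict or explicit gap" is a tri-state check: each condition is proven true, proven false, or unknown. The sketch below assumes hypothetical `CheckResult` and `Verdict` types and a hypothetical `decide` function; it illustrates the idea under those assumptions and is not Konvu's implementation.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CheckResult:
    condition: str
    holds: Optional[bool]  # True or False once verified; None when context is missing
    evidence: str = ""     # what decided it, e.g. a file path or config value


@dataclass
class Verdict:
    status: str  # "exploitable", "not_exploitable", or "needs_context"
    evidence: list[str] = field(default_factory=list)
    missing: list[str] = field(default_factory=list)


def decide(results: list[CheckResult]) -> Verdict:
    # One refuted precondition is enough to rule exploitation out.
    refuted = [r for r in results if r.holds is False]
    if refuted:
        return Verdict("not_exploitable", [f"{r.condition}: {r.evidence}" for r in refuted])
    # Otherwise any unverified condition blocks an automatic verdict.
    missing = [r.condition for r in results if r.holds is None]
    if missing:
        return Verdict("needs_context", missing=missing)
    # Every precondition verified: keep the full evidence chain for review.
    return Verdict("exploitable", [f"{r.condition}: {r.evidence}" for r in results])


results = [
    CheckResult("JndiLookup class present", True, "lib/log4j-core-2.14.1.jar"),  # hypothetical path
    CheckResult("formatMsgNoLookups not set", True, "no override found in startup flags"),
    CheckResult("attacker-controlled data reaches logger", None),
]
verdict = decide(results)
print(verdict.status, verdict.missing)
# needs_context ['attacker-controlled data reaches logger']
```

The ordering is a deliberate choice in this sketch: a single refuted condition settles the verdict even when other checks are still unknown, and only when nothing is refuted do the unknowns become a short list of questions for your team.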

Augmentation, not replacement

Konvu works alongside your existing scanners. We ingest findings from your current tools, add exploitability classifications with evidence, and push results back into your workflows. Your scanner investments stay intact; you just stop drowning in their output.

Deployment That Fits Enterprise Reality

Managed or self-hosted

Two options depending on your security and compliance requirements:

Managed deployment runs via our GitHub App with read-only access to the repositories you choose. We analyze code and configurations in a secure, isolated environment; you get exploitability verdicts and evidence without shipping source off your perimeter. Ideal for teams that want to move fast and keep integration simple.

Self-hosted keeps your code and all analysis inside your infrastructure. We provide the same engine as a deployable service you run in your own environment—no code or findings ever leave your network. Built for regulated industries, air-gapped setups, or any organization that requires full control over where data lives.

Either way, setup takes minutes, not weeks.

Auditable, verifiable decisions

If you're dismissing findings, how do you know you're not missing real risks? Every verdict includes the full evidence chain: file paths, configuration values, and the reasoning behind each conclusion. Your team can verify any decision in minutes. When we lack the context to make a confident determination, we say so explicitly and tell you exactly what we need. The last mile is on you: provide that context—how a service is used, whether a feature is enabled, where a config lives—and we turn it into a clear, documented verdict instead of guessing.

This isn't a black box. It's auditable, reproducible, and designed for teams that need to defend their decisions to auditors and executives.

Evidence this works

At a $50B enterprise retailer with a mature security program, 93% of vulnerabilities flagged by their existing scanners didn't need fixing. The scanner was technically correct—the vulnerable code existed. But it wasn't exploitable in their environment.

That's thousands of engineering hours returned to building product instead of chasing theoretical risks.

Looking Ahead

Independent recognition from Latio signals that the problem we're solving is real and the approach is resonating with security teams who are tired of drowning in noise.

The full report is available here. If you want to see what exploitability analysis looks like on your backlog, reach out.