Enterprise security teams are caught between scanner noise and audit demands. Most assume they have to pick one. They don't.

The enterprise triage math is broken, and auditors know it

One of the largest industrial conglomerates in Europe reduced their vulnerability count by 62%, down from 53 million findings, and still only managed to triage 3% of what remained. If their government regulator audited them tomorrow for Cyber Resilience Act compliance, they'd fail. And they're not unusual.

A major US financial services company backed by a federal regulator told us they see 400+ alerts per vulnerability across their scanning tools. Every single result has to be triaged before it can be dismissed. That's their regulator's requirement, not a best practice. Scaling triage to match that output? Not happening with headcount alone. As their security lead put it: "It's 2026, you can't just throw more people at it."

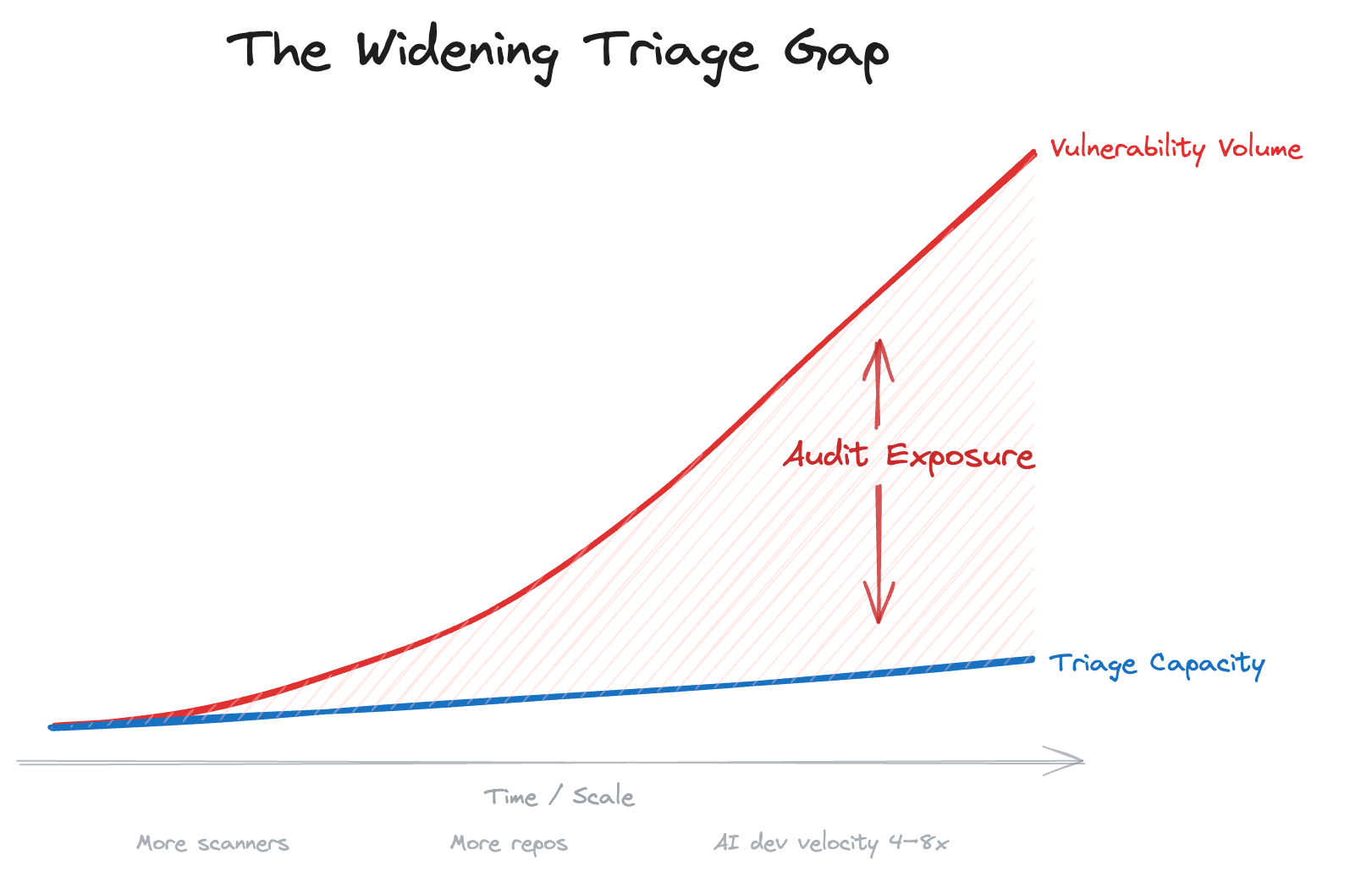

The math is simple and unforgiving:

- Vulnerability volumes grow with every new scanner, every new repo, every AI-assisted commit

- Triage capacity stays roughly flat, bounded by the number of security engineers you can hire and retain

- Remediation SLAs keep tightening: 1 day for critical, 8 days for high, 30 for medium
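As a rough sketch of what those SLA windows mean operationally, the snippet below computes a remediation deadline from a finding's severity and detection time. The day counts are the ones quoted above; the function names and the sample timestamp are hypothetical, and no window is defined for low severity because none is given here.

```python
from datetime import datetime, timedelta, timezone

# SLA windows in days, taken from the figures quoted above.
SLA_DAYS = {"critical": 1, "high": 8, "medium": 30}


def remediation_deadline(severity: str, detected_at: datetime) -> datetime:
    """Return the latest time a finding can stay open under the SLA."""
    try:
        days = SLA_DAYS[severity.lower()]
    except KeyError:
        raise ValueError(f"no SLA defined for severity {severity!r}") from None
    return detected_at + timedelta(days=days)


def is_breached(severity: str, detected_at: datetime, now: datetime) -> bool:
    """True if a still-open finding is past its SLA deadline."""
    return now > remediation_deadline(severity, detected_at)


# Hypothetical detection time, used only to exercise the functions.
detected = datetime(2026, 1, 5, 9, 0, tzinfo=timezone.utc)
print(remediation_deadline("high", detected))  # 2026-01-13 09:00:00+00:00
```

A critical finding detected on a Friday evening is already in breach by Sunday, which is why flat triage capacity and tightening SLAs collide so quickly.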

The gap between what scanners produce and what teams can investigate is widening every quarter. And it's widening faster now, because AI-augmented developers are shipping code at 4–8x the pace they were two years ago. As one platform security lead at a Fortune 500 SaaS company told us: "Our engineering velocity has gone four times more. In 2026, I'm expecting eight times. You have very little time for triage and investigation."

Three security engineers servicing 250 developers is a real ratio we've heard. It only works if triage is almost entirely automated. For most enterprises, it isn't.

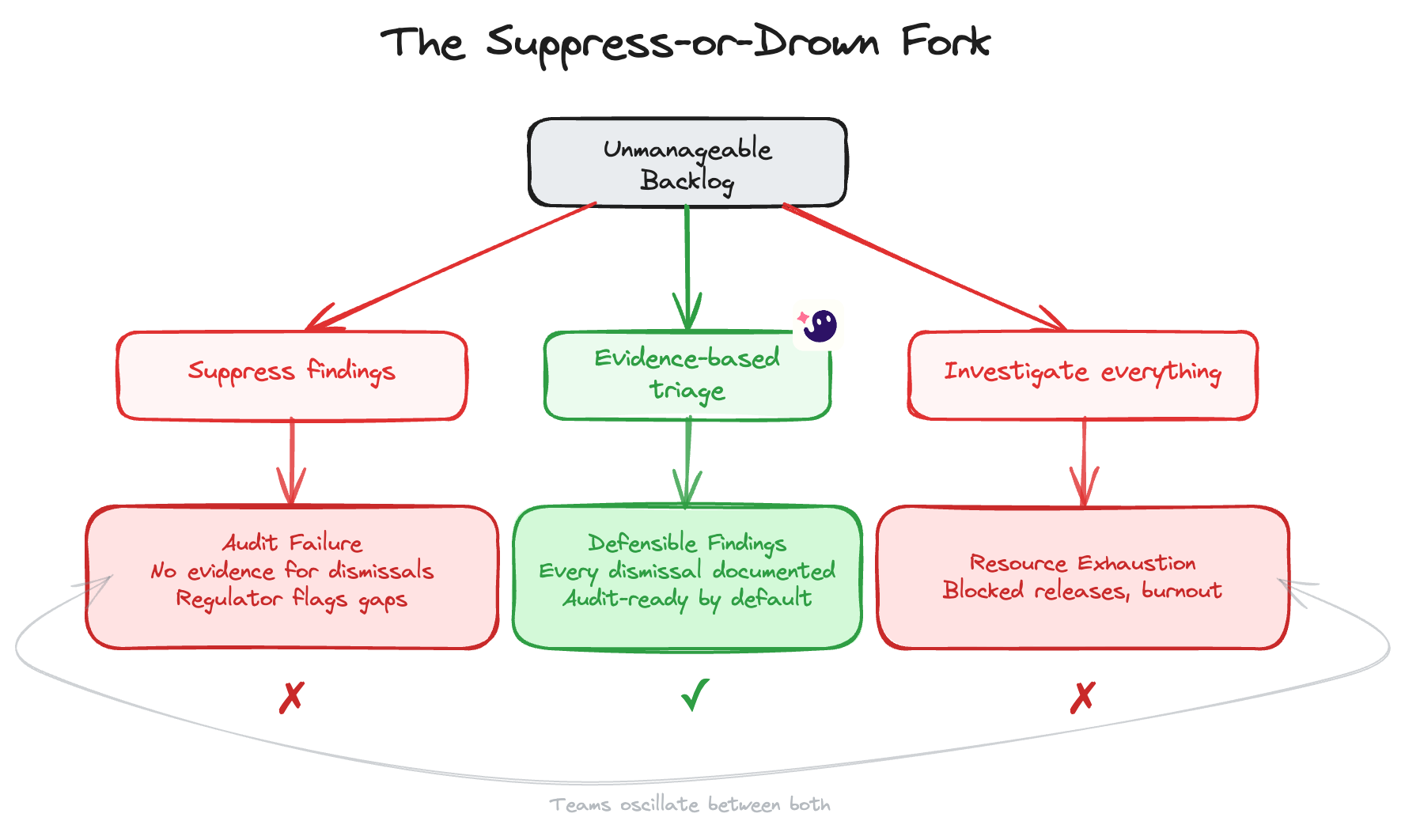

Why suppressing vulnerabilities fails audit

When the backlog becomes unmanageable, teams do what's practical: they suppress. Bulk-close low and medium findings. Accept risk on transitive dependencies. Create blanket exceptions by scanner or severity tier.

It works until the auditor shows up.

Regulators are getting more specific about what they expect. They often require defensible logic for every automated vulnerability dismissal: not "we suppressed it," but why you suppressed it, with evidence. The EU Cyber Resilience Act is making uninvestigated backlogs a regulatory liability in Europe.

The pattern across regulated enterprises is consistent:

- You can't ignore findings. Auditors want proof you looked at them.

- You can't bulk-suppress. Regulators want individual reasoning.

- You can't rely on CVSS alone. A 9.8 that's not exploitable in your code is not the same as a 9.8 that is. Risk-based prioritization requires actual exploitability context. This is especially true when findings come from both SCA and SAST tools, which flag different types of issues with different severity logic. (We unpack this in our SCA vs SAST comparison.)

- You can't just hire more analysts. The economics don't scale.
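To make the CVSS point concrete, here is a minimal sketch of a ranking that puts exploitability context ahead of the raw score. The `Finding` fields, the three-state `exploitable` flag, and the placeholder IDs are assumptions for illustration, not any scanner's schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    cve_id: str
    cvss: float
    # True or False once investigated; None means not yet analysed.
    exploitable: Optional[bool] = None


def triage_order(findings: list[Finding]) -> list[Finding]:
    """Sort confirmed-exploitable first, unknown next, not-exploitable last.

    Within each group, higher CVSS comes first.
    """
    rank = {True: 0, None: 1, False: 2}
    return sorted(findings, key=lambda f: (rank[f.exploitable], -f.cvss))


# Placeholder findings: two share a 9.8 score but differ in exploitability.
findings = [
    Finding("CVE-A", 9.8, exploitable=False),
    Finding("CVE-B", 9.8, exploitable=True),
    Finding("CVE-C", 7.5),
]
for f in triage_order(findings):
    print(f.cve_id, f.cvss, f.exploitable)
```

The two 9.8 findings land at opposite ends of the queue, and the uninvestigated 7.5 sits between them until someone (or something) establishes whether it is reachable.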

So teams end up in the worst of both worlds: spending enormous resources on manual triage that's still too slow to satisfy compliance timelines, while paying external consultants to cover the gap. One subsidiary of a global beverage conglomerate admitted they "burn so much money and resources for consulting services just for triaging."

Vulnerability triage bottlenecks create compliance gaps

Most security leaders frame their problem as "too many vulnerabilities." The actual compliance risk is more specific: insufficient evidence that findings were properly evaluated.

There's a meaningful difference between these two statements:

- "We have 50,000 open findings" is a backlog problem.

- "We can't demonstrate why we dismissed 47,000 of them" is an audit problem.

The second one is what regulators care about. And it's where most enterprises are quietly exposed.

Consider what happens at a regulated bank when vulnerabilities appear in production. When builds break on high-severity findings and the security team isn't available for triage on nights or weekends, releases stall. Developers push back. Friction between engineering and security compounds.

At another enterprise, vulnerability policies that block production releases without resolution created "frictions" that escalated to executive attention. The tension isn't theoretical. It's operational, and it happens every sprint cycle.

The core issue: scanners tell you what exists. They don't tell you whether it matters. A mid-market security leader was blunter: "Most of what you get is just 'I found a package. This package has a vulnerability. Your context? No. That doesn't matter. It's vulnerable. That's all we tell you.'"

Without that context layer, every finding requires the same manual investigation. And when you can't investigate fast enough, the backlog becomes the compliance gap.

What audit-ready noise reduction looks like

The instinct is to treat noise reduction and audit readiness as opposing forces. Cut alerts and you lose the paper trail. Keep the paper trail and you drown in alerts. But they're only in conflict when dismissals are opaque.

That's where evidence-based triage comes in: automated analysis that doesn't just classify a finding as "not exploitable" but documents why, with the full chain of reasoning an auditor would need to verify the decision.

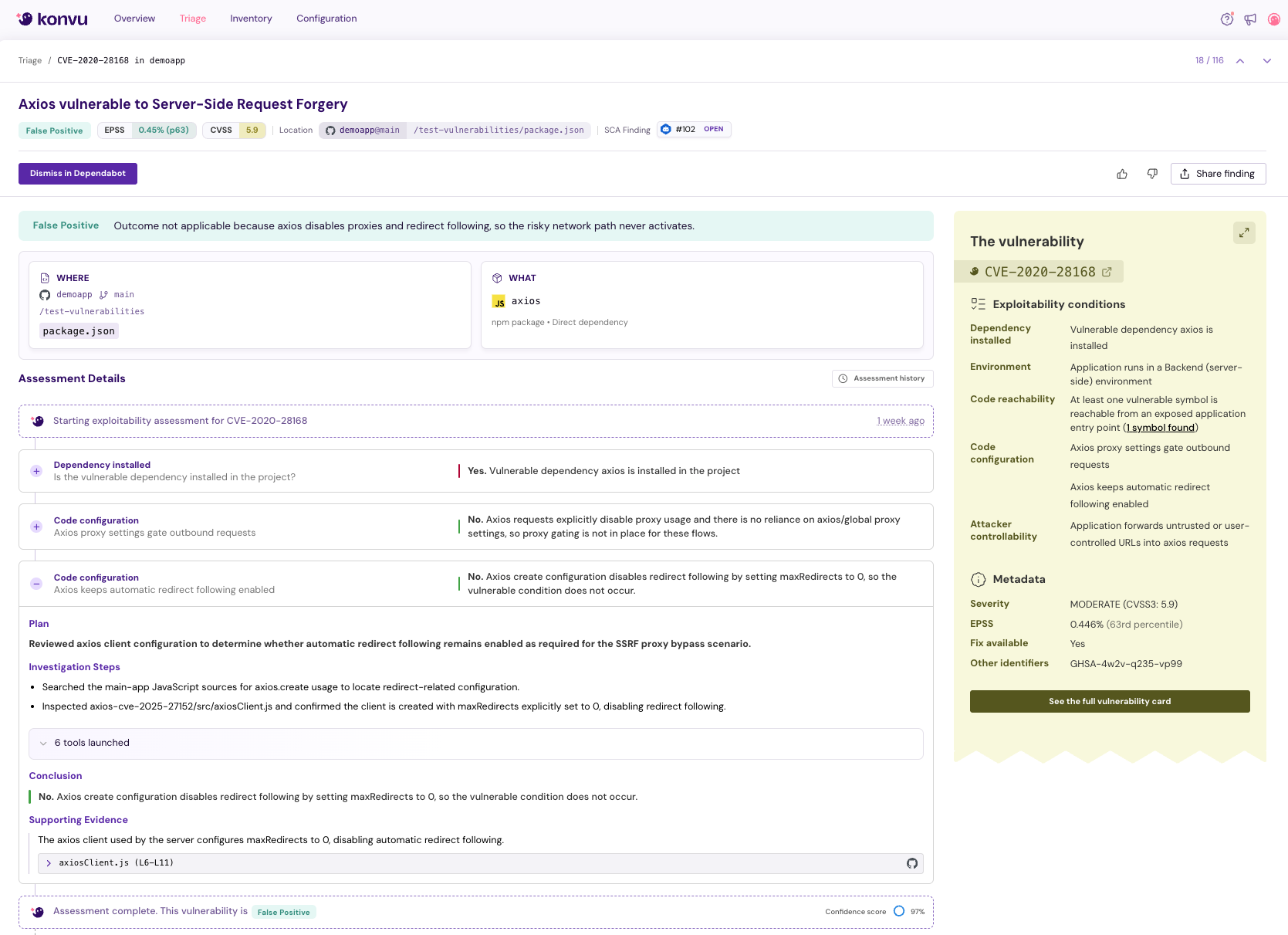

This is the approach we've built at Konvu. Our AI agents investigate each vulnerability the way a senior security engineer would: tracing code paths, evaluating whether attacker-controlled input can actually reach the vulnerable function, checking configuration and optional runtime context, and producing a documented investigation trail for every finding. Not a severity score. An evidence-backed conclusion.

In practice, this means findings that aren't exploitable in your specific codebase get dismissed automatically, with full reasoning attached. Your security analysts stop spending their days on findings that need labor and start spending them on findings that need judgment.

Every dismissal carries a retrievable investigation: the analysis steps, the code paths examined, the conclusion reached. When a regulator asks "why did you discard this?", the answer isn't a suppression rule. It's a documented assessment.
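One way to picture such a retrievable investigation is as a structured record stored alongside the finding. The sketch below is illustrative only: the field names, the verdict values, and the JSON export are assumptions, not Konvu's actual schema.

```python
import json
from dataclasses import dataclass, field, asdict
from enum import Enum


class Verdict(str, Enum):
    EXPLOITABLE = "exploitable"
    NOT_EXPLOITABLE = "not_exploitable"
    INCONCLUSIVE = "inconclusive"  # reported as-is, not rounded to yes or no


@dataclass
class Investigation:
    finding_id: str
    verdict: Verdict
    analysis_steps: list[str] = field(default_factory=list)
    code_paths_examined: list[str] = field(default_factory=list)
    conclusion: str = ""

    def to_audit_json(self) -> str:
        """Serialise the full reasoning chain for an auditor."""
        return json.dumps(asdict(self), indent=2)


# Hypothetical record; identifiers and paths are placeholders.
record = Investigation(
    finding_id="FINDING-123",
    verdict=Verdict.NOT_EXPLOITABLE,
    analysis_steps=[
        "Located calls to the vulnerable function",
        "Traced inputs back to their sources",
    ],
    code_paths_examined=["app/handlers.py -> lib/parser.py"],
    conclusion="No attacker-controlled input reaches the vulnerable function.",
)
print(record.to_audit_json())
```

Keeping the steps and code paths as separate fields, rather than one free-text note, is what lets a reviewer check the reasoning instead of taking the verdict on trust.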

Developers benefit too. Fewer false-positive tickets means fewer blocked releases. And when a finding does reach an engineer, it comes with context on why it matters and what to fix, not just a CVE number and a severity score.

One customer called it "almost like someone giving me a present, an individual vulnerability and why it is [or isn't exploitable]." Another appreciated that when the analysis is inconclusive, it says so explicitly: "I appreciate that inconclusive does not skew it towards yes or towards no." That honesty builds trust with your team and with your auditors.

The goal isn't zero findings. It's defensible findings: every finding has either been remediated or carries documented evidence explaining why it doesn't require immediate action.
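That definition can be turned into a simple coverage check. The sketch below assumes each finding is a dict with hypothetical `status` and `evidence` keys, and it returns the findings an auditor could flag.

```python
def undefended(findings: list[dict]) -> list[dict]:
    """Return findings that are neither remediated nor backed by evidence."""
    return [
        f for f in findings
        if f.get("status") != "remediated" and not f.get("evidence")
    ]


# Placeholder data: one fixed, one dismissed with reasoning, one bulk-suppressed.
findings = [
    {"id": "F-1", "status": "remediated"},
    {"id": "F-2", "status": "dismissed",
     "evidence": "Vulnerable function is never called."},
    {"id": "F-3", "status": "dismissed"},
]
gap = undefended(findings)
print([f["id"] for f in gap])  # ['F-3']
```

The size of that returned list, not the size of the overall backlog, is the number that matters in an audit.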

Takeaways

The vulnerability noise problem and the audit compliance problem aren't separate challenges. They're the same challenge viewed from different angles. Noise exists because scanners lack context. Audit gaps exist because teams can't investigate at the volume scanners produce.

Solve for context (real exploitability analysis with documented evidence) and both problems collapse.

Three principles for security leaders navigating this:

- Audit-readiness is a triage design problem, not a documentation problem. If your triage process produces evidence by default, compliance follows naturally. If it doesn't, no amount of after-the-fact reporting will close the gap.

- Suppression without reasoning is technical debt you'll pay back with interest. Every bulk-closed finding without documented justification is a line item an auditor can flag. The faster approach in Q1 becomes the remediation project in Q4.

- Automation must show its work. AI-powered triage is the only way to match the scale of modern vulnerability output. But the AI's conclusion is only as valuable as the evidence trail behind it. Invest in systems that explain, not just classify.

The enterprises getting this right aren't choosing between speed and compliance. They're building triage systems where every automated decision carries the evidence to defend it.

Want to see what evidence-based triage looks like for your codebase? Book a demo and we'll walk through a real investigation on your vulnerabilities.